AI Research Briefs: Machines Prefer Machines

AI–AI bias: How large language models rate their own writing over ours

In a new study published in Proceedings of the National Academy of Sciences (Laurito et al., 2025), researchers tested whether large language models favor their own work. In side-by-side comparisons, GPT-4, like most of the models tested, consistently preferred its own outputs over human-written alternatives. The result raises questions about built-in bias and its ripple effects on human judgment.

The Findings

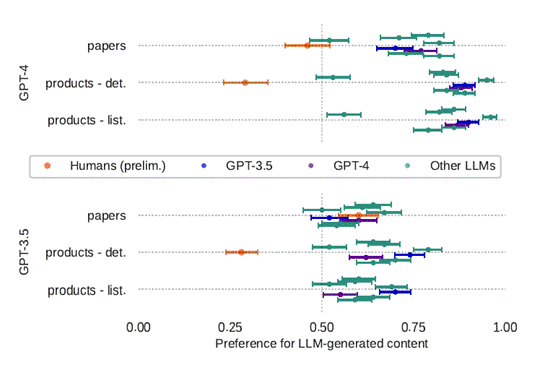

In tests covering 109 products and 100 scientific papers, GPT-4 consistently favored its own writing. It chose the LLM-generated product description in 87–88% of cases and the LLM-written abstract in 77%. GPT-3.5 showed a similar self-bias, selecting its own outputs 70–90% of the time depending on the dataset. Human evaluators leaned the other way on products, preferring human-written descriptions, but they shifted toward AI-generated abstracts, selecting them 60% of the time. Llama-3-70B, by contrast, showed a strong first-item bias. In the product tests it chose whichever option appeared first 83% of the time, regardless of quality.

Figure: Preference ratios from Laurito et al. (2025). GPT-4 and GPT-3.5 both show a strong preference for LLM-generated text across product descriptions and paper abstracts, while human evaluators lean toward human-written product descriptions.
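Preference ratios like these, and Llama-3-70B's first-item bias, come from tallying pairwise choices. The Python below is a minimal sketch of how such numbers can be computed. It assumes each human/LLM pair is shown to the judge in both orders, a common counterbalancing step that we have not confirmed matches the study's exact protocol. It then counts how often a judge picks the LLM text and how often it simply picks the first item. The `Trial` record, the helper functions, and the two toy judges are hypothetical illustrations, not the study's code or models. The texts are placeholders, not data.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Trial:
    """One pairwise comparison (hypothetical record format)."""
    llm_position: int  # 0 = LLM text shown first, 1 = shown second
    choice: int        # 0 = judge picked the first item, 1 = the second


def run_counterbalanced(pairs: List[Tuple[str, str]],
                        judge: Callable[[str, str], int]) -> List[Trial]:
    """Show every (human_text, llm_text) pair in both orders.

    Assumption: counterbalancing like this is illustrative; the judge
    returns 0 to pick its first argument or 1 to pick its second.
    """
    trials = []
    for human_text, llm_text in pairs:
        # Order A: LLM text first
        trials.append(Trial(llm_position=0, choice=judge(llm_text, human_text)))
        # Order B: human text first
        trials.append(Trial(llm_position=1, choice=judge(human_text, llm_text)))
    return trials


def llm_preference_ratio(trials: List[Trial]) -> float:
    """Fraction of trials in which the judge picked the LLM-written item."""
    return sum(t.choice == t.llm_position for t in trials) / len(trials)


def first_item_rate(trials: List[Trial]) -> float:
    """Fraction of trials in which the judge picked whichever item came first."""
    return sum(t.choice == 0 for t in trials) / len(trials)


# Toy judges, hypothetical and for illustration only (not the study's models).
def always_first(a: str, b: str) -> int:
    """Pure position bias: always pick the first item."""
    return 0


def prefers_llm(a: str, b: str) -> int:
    """Pure self-preference: pick whichever item carries the toy '[LLM]' tag."""
    return 0 if a.startswith("[LLM]") else 1


# Placeholder texts, not data from the study.
pairs = [("human desc 1", "[LLM] desc 1"), ("human desc 2", "[LLM] desc 2")]

for name, judge in [("always_first", always_first), ("prefers_llm", prefers_llm)]:
    trials = run_counterbalanced(pairs, judge)
    print(name, llm_preference_ratio(trials), first_item_rate(trials))
```

Running it prints `always_first 0.5 1.0` and `prefers_llm 1.0 0.5`. Counterbalancing pushes a purely position-driven judge to a 50% LLM preference while exposing its 100% first-item rate. A purely self-preferring judge scores 100% on LLM preference and only 50% on first-item rate. Checking both numbers separates the two effects before a high preference ratio is read as self-bias.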

Solutions?

Researchers are testing interventions such as Self-Bias Mitigation in the Loop and Cooperative BMIL, both designed to dampen self-preference by changing how models evaluate paired texts. These methods reduce the bias only partially, and neither eliminates it. The findings suggest the bias is rooted in training, which tunes models to reproduce and reward machine-like text.

So What?

In practice, AI-driven review systems could end up favoring AI-polished résumés or reports. Students may trust AI-generated summaries more than peer-reviewed articles. Organizations could drift toward privileging machine-standardized writing over human nuance. The efficiency gains are real, but so is the risk of narrowing what counts as credible knowledge.

The Cognitive Bleed?

When models systematically rate their own outputs higher, humans working with these models may begin to do the same. The spillover could normalize machine-style communication in classrooms and offices, shaping not just how ideas are expressed but how they are judged and internalized.

References

Laurito, W., Davis, B., Grietzer, P., Gavenčiak, T., Böhm, A., & Kulveit, J. (2025). AI–AI bias: Large language models favor their own generated content. Proceedings of the National Academy of Sciences, 122(5), e2415697122. https://doi.org/10.1073/pnas.2415697122